How do you optimize a system by a factor of 100, when you already built it the best you knew how?

In recent years, we’ve found ourselves in conversations with potential customers who would love to use our product, but they generated so much log data that we couldn’t handle their scale. When your company name is “Scalyr”, this is embarrassing.

It’s not hard to see where all the data is coming from. Modern architectures generate staggering volumes of logs. Microservices, Kubernetes, serverless – every major trend increases the number of components, and therefore the number of logs. That trend is not going anywhere but up. As architectures become more complex, log volume increases – and so does the need to analyze that log data to understand and troubleshoot application issues.

This has led to a world with two types of log management solutions: the ones no one can afford, and the ones no one can use – because they’re too slow, unreliable, or just plain can’t scale. Companies are operating from a position of data scarcity, unable to retain all of the information they need.

We’re in the business of providing insights based on log data. We’re big believers in realtime ingestion (providing an up-to-the-moment view) and interactive performance (processing queries in less than one second, so engineers can get into a flow state during an investigation). We built our product on a unique architecture that combines high performance, a low-overhead index-free design, and massively parallel processing, to deliver this experience at an affordable price. But when customers started coming to us with hundreds or thousands of terabytes per day, we had to turn them away.

Everyone on the team agreed that this must not stand. To get ahead of the curve, we’d have to improve the scalability of our solution by at least one, and preferably two, orders of magnitude. Considering that the existing system was already the product of years of effort, was this even possible? To highlight the technical challenge – akin to the first attempt to break the sound barrier – we code-named the project Sonic Boom.

As it turned out, breaking the cost/performance barrier was possible. Today, we’re excited to announce our next-generation SaaS log management solution, designed to drastically lower costs, scale to petabyte-per-day log volumes, all while maintaining interactive performance. Getting there required a fundamental change to our architecture, but at least as important are a thousand individual improvements – “little breakthroughs” – to make every aspect of the system more efficient. In this post, I’ll talk about how to approach a multiple-order-of-magnitude design challenge.

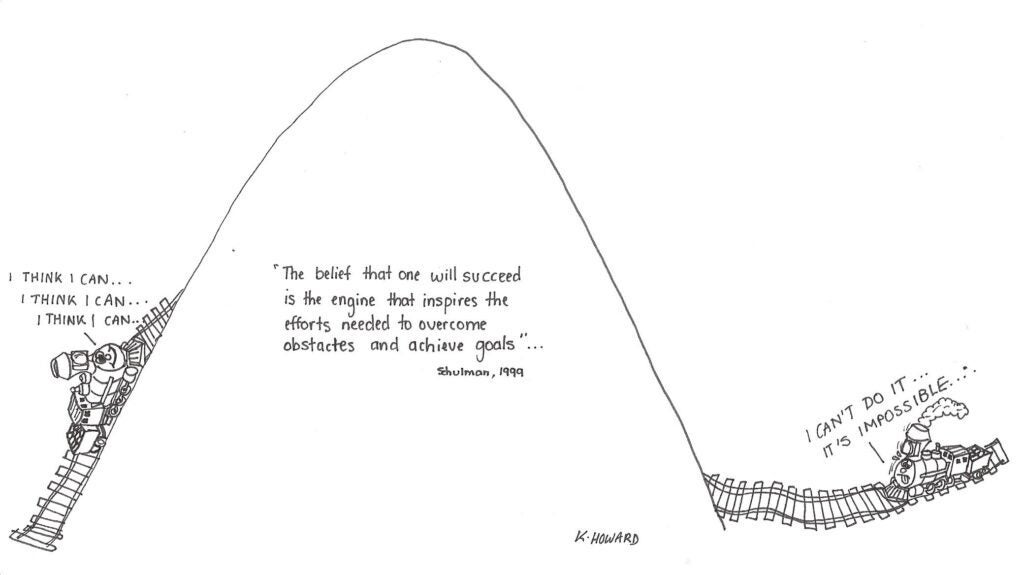

The first step is to believe

A basic requirement for any large undertaking is to believe you can do it. This may sound trite, but belief has two important effects:

- You will start to look for solutions that otherwise you might not have explored.

- You will whittle away at obstacles. Without faith, you might think “there’s no point in fixing X, because we’re blocked on Y anyway”. If you believe Y might have a solution, you’ll be motivated to take care of X. And then sometimes Y turns out to not be such a roadblock after all.

A well-known example of this phenomenon is the four-minute mile. For decades, runners had been trying – and failing – to run a mile in four minutes. In 1954, Roger Bannister finally did it, under less-than-ideal conditions to boot (cold day, wet track, small crowd). Once people knew it was possible, it took less than two months for Bannister’s new record to be beaten. The following year, three runners broke 4:00 in a single race.

This example can also be used to highlight the limits of the “first, believe” approach. Today’s world record for the mile is 3 minutes 43 seconds. If you believe, truly believe, you can be the first person to run a three-minute mile… it’s not going to happen. The feat you’re attempting has to be physically possible.

Analyze from first principles

Richard Feynman: There’s plenty of room at the bottom’

To decide whether your goal is possible, start by identifying the basic physical principles that determine the limits of what can be achieved.

In 1959, Nobel Prize winning physicist Richard Feynman gave a talk, “There’s Plenty of Room at the Bottom”, analyzing the limits of miniaturization. Working from first principles, he proposed the idea of direct manipulation of individual atoms – in other words, nanotech.

For log management, the theoretical limit of scalability – the intersection of data volume, performance, and cost – is determined by storage costs. There is always room for a cleverer way to organize and search data, but there’s no getting around the need for storage.

In our universe, speed of light is S3, not c

Previously, we stored logs on EC2 i3 instances, which have large SSD drives. This design provides high-speed access to large volumes of data, and served us well for many years. We make efficient use of storage, because our architecture delivers high performance without indexes. But even so, at hundred-terabyte-per-day scale, costs become unreasonable.

Best case, with 2x replication (plus a backup), storing 1 GB for one month on an i3 costs about $0.25. S3 is an order of magnitude more cost effective. And S3 charges only for the exact amount of data you’re storing at any given moment; when managing your own instance storage, you wind up adding overhead for capacity management, filesystem free space, imperfect reservation management, and other factors. This can easily exceed a factor of two.

For our purposes, cloud object storage is pretty close to a basic physical limit. It is difficult to beat the combination of scalability, reliability, performance, and cost offered by S3. Starting with known parameters such as the cost of S3 and the compressibility of log files, we were able to determine that, in principle, a 100x improvement in efficiency should be possible. We were aspiring to run a mile in four minutes, not three.

“Possible” does not mean “easy”

Having determined that our goal was physically possible, we had to set about eliminating all of the specific factors that stood in the way. This was a multi-year process, and required rethinking every aspect of our architecture, relentlessly carving away every component that wouldn’t scale or added cost. We needed to make enormous investments in areas such as caching, data layout, query planning, and workload management. Every part of the system had to be rearchitected, reimplemented, benchmarked, optimized, and tuned.

For instance: we’ve built a multi-level caching system. Over 99% of the time, when one of our users issues a search, the data is somewhere in the cache. But when it isn’t, we need to fetch data from S3, and we want those queries to be fast too. That means we sometimes need to fetch terabytes of data from S3 at, hopefully, interactive speeds.

Our original architecture relies on massive brute force: for short periods, we’re able to devote our entire compute cluster to a single query. That translates well to the new design. Remember that breakthroughs often require identifying the ultimate physical limits of your problem, and then designing a system that approaches those limits. In this case, the fastest possible way to retrieve data from S3 would be as follows:

- Use every node in our compute cluster to retrieve data in parallel.

- Fully saturate the network link on each node.

- Store data in compressed form, and decompress after downloading, to squeeze as much data through the network link as possible.

- Compress using an algorithm optimized for decompression speed, such that the combined CPU capacity of the node is able to keep up with the network link, leaving just enough spare capacity to search the data as it is retrieved.

And so that’s what we did. For instance, we extensively benchmarked S3 download performance, determining the optimal combination of parallel downloads and file size to maximize performance. We repeated this across multiple EC2 instance types, determining which combination of CPU capacity and network bandwidth offered the best performance-per-dollar. At one point we even implemented our own Netty-based asynchronous S3 client, though this has been superseded by the latest AWS client library.

Every aspect of the system required similar levels of optimization. What’s better than downloading a block of data at high speed? Finding it already in your cache. What’s better than finding it in your cache? Skipping that block entirely, because you’re able to use metadata to determine that it contains no matches for the query. And so on. Through multiple levels of optimization, we were able to bring down the overall system cost to match the gain of the move to S3.

S3: more than just a pretty face price

The move to S3 does more than just lower our storage costs. It allows us to scale compute up and down independently of storage, according to the amount of storage and processing power we need. As the head of our search team, John Hart, recently explained: if our largest customer called us and said they wanted to switch from 1 month retention to 12 month retention, in our previous design this would require firing up 12x more machines and thus 12x more AWS spend. In our new design, all we have to do is not delete the data for an additional 11 months.

Because our instances no longer contain persistent state, we have much more flexibility in managing them. If we want to remove an instance, we no longer need to migrate terabytes of data. We can add and remove instances according to processing needs. We can experiment with different instance types. We can leverage spot instances. And our operations team no longer needs to sweat individual instance failures.

It’s the light fixtures that get you

Based on the new architecture, we’ve developed a technical roadmap to reduce our internal cost-per-gigabyte-stored by 100x. When optimizing a system, you’re always limited by the least-optimized component. To conceptualize this, imagine that you want to reduce the cost of new home construction by 100x. One online source states that the average construction cost of a new home in the US is $281,200. The same source estimates “light fixtures and covers” at $3,150, or 1.1% of the total. This means that even if you were able to reduce all other costs to zero – foundation, framing, plumbing, electrical, roof, all built for free – the light fixtures alone would prevent you from achieving a 100x cost reduction.

To realize the full benefit of the move to S3, we needed to drastically improve every aspect of our architecture. Earlier, I described some of the things we’ve done to improve query performance. Equally important has been the effort to reduce costs. For instance, transmitting 1GB of data between EC2 instances in different zones costs $0.02 – as much as it costs to store that gigabyte in S3 for a full month! So we redesigned the high-bandwidth data flows in our architecture to eliminate AZ crossings.

This is just one of many examples. While an undertaking of this nature can be a multi-year effort, the principles are straightforward: identify every cost that would be significant at your target efficiency level, and develop a plan for addressing it. To achieve very large savings, often you need to look for ways to eliminate costs, rather than simply reducing them. For instance, rather than compressing data that was being sent across AZs, we needed to eliminate AZ crossings entirely.

Scaling spacetime, not just space

This writeup has focused on how we re-architected our system to process large data volumes at a manageable cost. But sheer data volume was not the only problem we found customers wrestling with. Some solutions are optimized for short-term, frequently-queried data; others for long-term, rarely-queried data. To work around this, engineering teams were cobbling together multiple log repositories, such as Elasticsearch, S3 + Athena, and Splunk.

This multi-tool approach causes all sorts of problems. A typical organization will have many data sources, used in many ways: monitoring, troubleshooting, compliance, trend analysis, capacity planning, ticket investigation, bug forensics, security, analytics, etc. Each has different requirements for retention, query time span, query complexity, and performance. There’s no clean dividing line, so multiple tools often means storing multiple copies of data, adding cost, and requiring users to navigate each tool’s limitations.

Scalyr was originally designed for short-lived, frequently-queried data. But to be truly scalable, a solution must address the full range of use cases. Fortunately, our new architecture lends itself to this: S3 is a great place to store infrequently-accessed data, and our caching architecture supports frequently-queried data. Ultimately, we might incorporate additional storage tiers, such as Glacier.

Operability or it didn’t happen

No matter how scalable the software and hardware components of a system, it’s also necessary to scale the human element – operations. When increasing scale by an order of magnitude, we also needed to reduce manual effort-per-node by at least that much.

Traditionally, our operations have been complicated by the presence of those i3 instances, which are highly stateful. When an instance failed, the data it contained needed to be re-replicated to a new instance.

In the new architecture, long-lived state resides in managed, reliable services such as S3 and DynamoDB. Building on that foundation, we’ve invested in making each component of our architecture self-healing. Even as we increase scale by an order of magnitude, we expect the total operational workload to decrease.

In closing: 1000 easy steps to Mach 1

Today at Scalyr, we officially launched our next-generation SaaS log management solution, designed to:

- Scale to data volumes from 1 GB/day to 1 PB/day and beyond

- Scale to use cases from short-term, high-usage to multi-year, rarely-accessed data

- Maintain performance and affordability even as data volumes explode

Modern architectures generate staggering volumes of logs. Most people think that retaining detailed event data is impossible; we believe it’s essential. To enable engineering teams to move forward with confidence, they need a rich view into the behavior of their systems. And so in a project code-named Sonic Boom, we’re increasing the scalability of our architecture – the intersection of data volume, cost, and performance – by 100x.

This has been a multi-year effort by an outstanding group of engineers. To achieve these goals, we needed to have faith that a 100x improvement in efficiency was possible, and we needed to sustain that faith through a long period of iterative design and development. A journey like this never really ends; the architecture we’ve developed will support further improvements for years to come.